Data Analytics Made Simple.

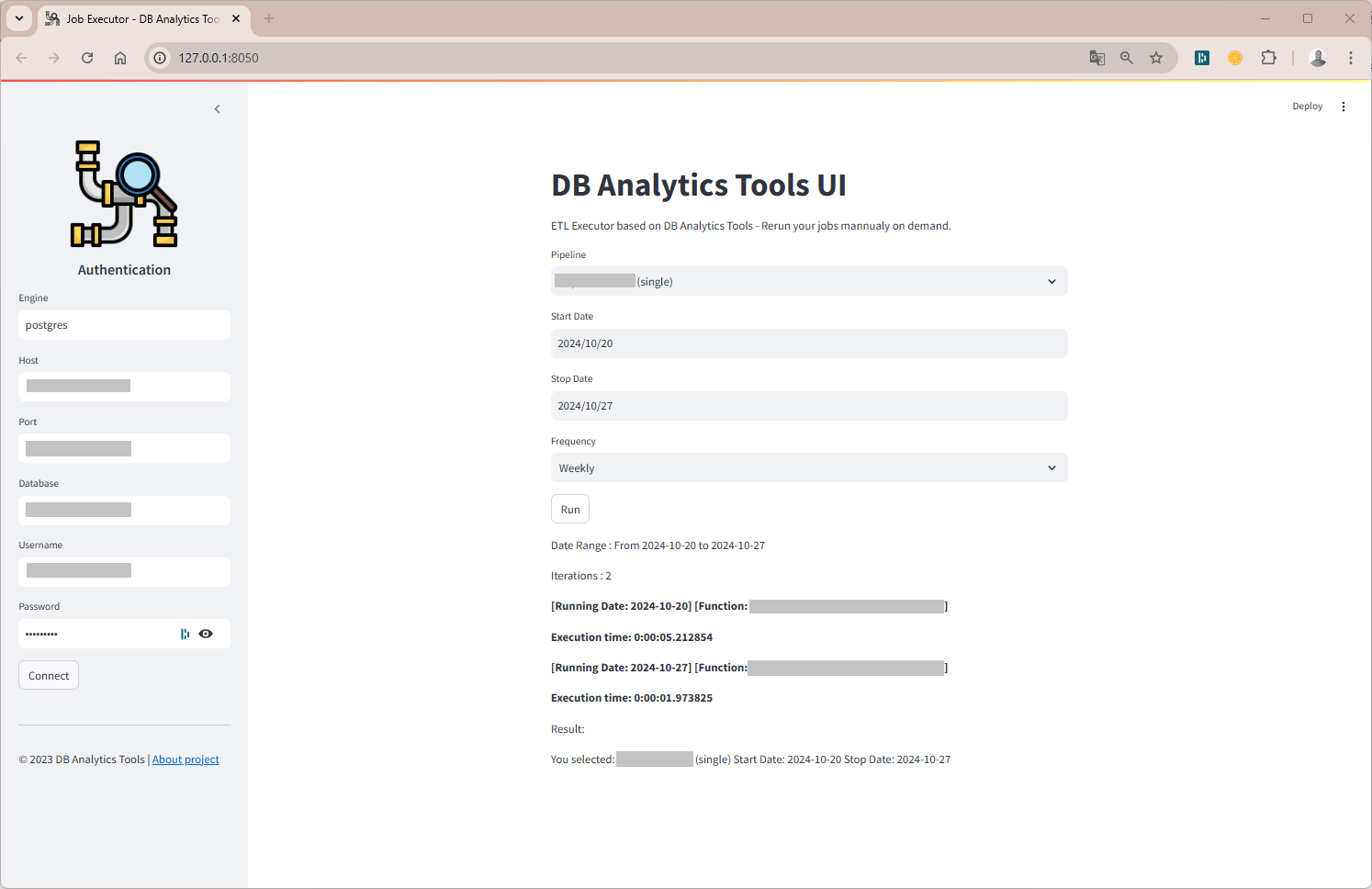

A lightweight Python framework to bridge the gap between complex data warehouses and actionable analytics. Open-source, modular, and fast.

`pip install db-analytics-tools`